The Documentation Revolution and Its Compliance Shadow

ACROSS THE UNITED STATES — ABA practices are adopting AI-powered session note tools at an accelerating pace. A November 2024 SimplePractice survey found that 50 percent of clinicians use artificial intelligence for daily tasks including documentation. Products like RethinkBH’s Session Note AI, CentralReach’s CR NoteDraftAI, ABA Matrix, Mentalyc, BastionGPT, and Supanote are marketing HIPAA-compliant AI documentation to behavioral health providers. The promise is compelling: generate structured session notes in seconds rather than spending 30 to 60 minutes per session on manual documentation, freeing clinicians to focus on direct care.

But the compliance implications of this shift are profound and inadequately understood by most ABA practice owners. Every AI tool that creates, receives, maintains, or transmits protected health information must comply with HIPAA’s Privacy Rule, Security Rule, and Breach Notification Rule. When an AI system listens to a therapy session, transcribes behavioral observations, or generates a progress note that contains client identifiers, it is processing PHI. The legal and regulatory framework governing that processing is the same framework that governs every other electronic health record system — but the technology is fundamentally different, and the compliance requirements are correspondingly more complex.

The Behavior Analyst Certification Board has addressed this issue directly, stating that BACB certificants must be aware of risks to ensure compliance with regulatory requirements including HIPAA, ethics requirements including the Ethics Code for Behavior Analysts, and state and federal laws. The BACB specifically warns that information entered into generative AI applications may be used as learning material for the software and be exported to other users. That privacy threat is specific to AI systems, and traditional EHR platforms do not present it. It can turn a documentation shortcut into a potential HIPAA violation, ethics code breach, and audit liability all at once.

The Business Associate Agreement: The Non-Negotiable Threshold

The most fundamental compliance requirement for any AI tool that processes PHI is a Business Associate Agreement. Under HIPAA, any third-party vendor that creates, receives, maintains, or transmits PHI on behalf of a covered entity — which includes every ABA practice that bills insurance — must sign a BAA. The BAA establishes the vendor’s legal obligations to protect PHI, including encryption requirements, access controls, breach notification procedures, and limitations on how the data can be used.

This requirement is absolute. An AI note-taking tool that does not offer a signed BAA is not HIPAA-compliant and should not be used to handle PHI, regardless of what its marketing materials claim. Terms like HIPAA-friendly, HIPAA-ready, or HIPAA-aligned are not legal designations — they are marketing language that carries no regulatory weight. The only relevant question is whether the vendor will sign a BAA that establishes enforceable legal obligations under HIPAA.

The BAA requirement extends to every link in the data processing chain. If an AI note-taking tool uses a third-party large language model — for example, OpenAI’s GPT models accessed through the consumer API — and that third party processes PHI, a BAA must exist between the AI vendor and the LLM provider as well. This creates a chain of Business Associate relationships: the ABA practice has a BAA with the AI note vendor, and the AI note vendor must have a BAA with every downstream processor that touches PHI.
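The chain described above can be checked mechanically once a practice has mapped who receives PHI from whom. The following is a minimal sketch, not a compliance tool: the `Processor` class, the `missing_baas` function, and the example parties are all hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Processor:
    """One party in the PHI processing chain (hypothetical model)."""
    name: str
    # True if this party has a signed BAA with whoever sends it PHI.
    baa_with_upstream: bool = True
    downstream: list["Processor"] = field(default_factory=list)


def missing_baas(node: Processor, path: tuple[str, ...] = ()) -> list[str]:
    """Return every link in the chain where PHI flows without a signed BAA."""
    gaps = []
    for child in node.downstream:
        if not child.baa_with_upstream:
            gaps.append(" -> ".join(path + (node.name, child.name)))
        # Keep walking: a gap can sit several hops below the practice.
        gaps.extend(missing_baas(child, path + (node.name,)))
    return gaps


# Illustrative chain: practice -> AI note vendor -> LLM provider and cloud host.
llm = Processor("LLM provider", baa_with_upstream=False)
host = Processor("Cloud host")
vendor = Processor("AI note vendor", downstream=[llm, host])
practice = Processor("ABA practice", downstream=[vendor])

gaps = missing_baas(practice)
print(gaps)
```

In this example the practice's own BAA with the vendor is in place, yet the check still reports a gap at the vendor-to-LLM link, which is the situation the paragraph above warns about.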

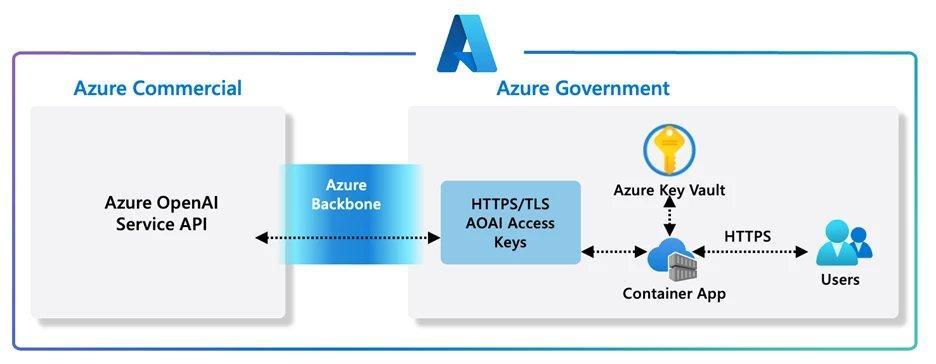

Azure OpenAI vs. consumer OpenAI: this distinction is critical for ABA practices evaluating AI tools. Microsoft’s Azure OpenAI Service is a HIPAA-eligible platform that supports BAA execution and provides enterprise-grade security controls. The consumer ChatGPT product does not meet HIPAA requirements and cannot be used with PHI. RethinkBH’s Session Note AI, for example, is built on Azure OpenAI infrastructure specifically to meet HIPAA compliance requirements. An AI vendor that uses consumer-grade LLM APIs without a BAA from the LLM provider is creating a HIPAA violation at the infrastructure level, regardless of what security measures exist at the application layer.

Microsoft Azure OpenAI Service architecture provides HIPAA-eligible AI infrastructure with enterprise-grade security controls for healthcare applications.

What HIPAA Actually Requires for AI-Generated Clinical Documentation

HIPAA’s requirements for AI systems that process PHI can be organized into three categories: the Privacy Rule, the Security Rule, and the Breach Notification Rule. Each imposes specific obligations that AI note-taking tools must meet.

Privacy Rule requirements: AI systems can only access PHI for permitted purposes. The AI tool must enforce the minimum necessary standard — accessing only the PHI needed to perform its documentation function, not retaining or using data beyond what is required for note generation. Critically, the Privacy Rule requires that PHI not be used for purposes beyond the scope of the BAA. If an AI vendor uses session data to train or improve its models, that use must be explicitly authorized in the BAA and disclosed to the practice. Many AI companies use customer data for model training by default — a practice that violates HIPAA unless specifically authorized.
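One way to picture the minimum necessary standard is as an allowlist applied before any data reaches the AI tool. The sketch below assumes a session record stored as a dictionary; the field names and the `NOTE_FIELDS` allowlist are hypothetical, and filtering fields this way is not the same as de-identifying the data.

```python
# Hypothetical allowlist: the only session fields the note generator needs.
NOTE_FIELDS = {"session_date", "targets", "behavior_counts", "abc_observations"}


def minimum_necessary(session_record: dict) -> dict:
    """Keep only allowlisted fields before anything is sent to the AI tool."""
    return {k: v for k, v in session_record.items() if k in NOTE_FIELDS}


# Example record with fields the documentation function does not need.
record = {
    "client_name": "Example Client",
    "insurance_id": "XYZ-000",
    "home_address": "1 Example St",
    "session_date": "2025-01-15",
    "targets": ["manding"],
    "behavior_counts": {"elopement": 2},
}

payload = minimum_necessary(record)
print(sorted(payload))
```

An allowlist is used rather than a blocklist so that any new field added to the record later is withheld by default until someone decides the note generator needs it.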

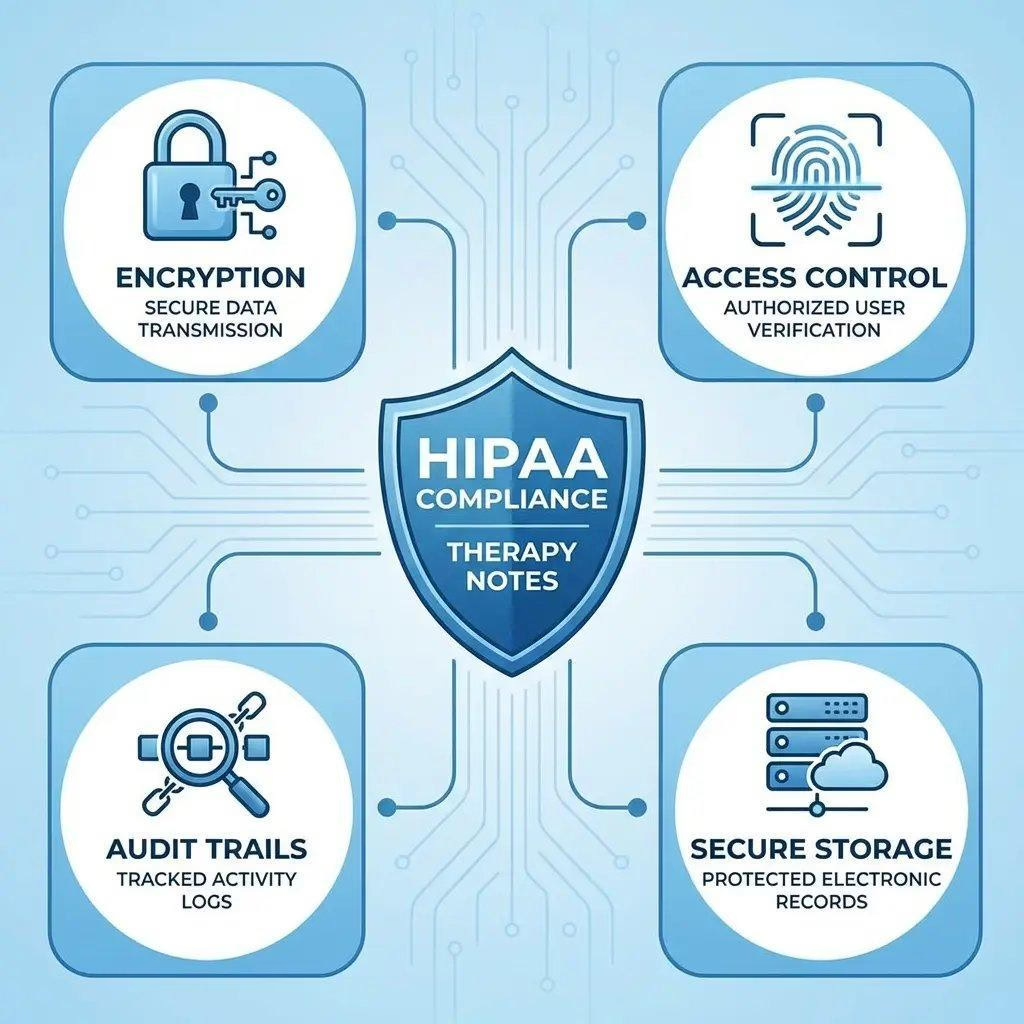

Security Rule requirements: AI systems are expected to encrypt PHI both in transit and at rest, enforce role-based access controls, maintain audit logs of all access attempts, and implement multi-factor authentication. The rule text treats encryption as an addressable specification and does not name multi-factor authentication, but both are widely treated as baseline safeguards. Data residency requirements may apply, dictating where PHI can be stored and processed geographically. The Security Rule also requires regular risk assessments, which must include the AI system and its data processing pipeline. Practices that adopt AI tools without updating their HIPAA risk assessments are out of compliance with that requirement.
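Role-based access control and audit logging work together: every access attempt is checked against the user's role and recorded whether or not it succeeds. The following is a minimal in-memory sketch; the roles, permissions, user names, and the `access` function are hypothetical, and a real system would write to tamper-resistant storage rather than a Python list.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map for an AI note tool.
PERMISSIONS = {
    "bcba": {"read_note", "edit_note", "sign_note"},
    "rbt": {"read_note", "edit_note"},
    "billing": {"read_note"},
}

audit_log: list[dict] = []


def access(user: str, role: str, action: str, note_id: str) -> bool:
    """Check the role's permissions and log the attempt, allowed or not."""
    allowed = action in PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "note_id": note_id,
        "allowed": allowed,
    })
    return allowed


# A BCBA may sign a note; a technician's attempt is denied but still logged.
access("dr_lee", "bcba", "sign_note", "note-001")
access("tech_01", "rbt", "sign_note", "note-001")
print([(entry["user"], entry["allowed"]) for entry in audit_log])
```

Logging denied attempts as well as successful ones matters because the failed attempts are often the first sign of misuse.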

Breach Notification Rule: if an AI system experiences an unauthorized disclosure of PHI — whether through a data breach, an AI hallucination that surfaces one client’s data in another’s notes, or a training data leak — the breach notification requirements apply. The practice must report the breach to affected individuals, HHS, and potentially the media, depending on the number of individuals affected. AI systems introduce novel breach vectors that traditional EHR platforms do not: model memorization, prompt injection attacks, and training data extraction are all categories of AI-specific security risks.
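The dependence on the number of individuals affected can be sketched as a simple routing function. This is a simplified illustration based on the commonly cited thresholds in 45 CFR 164.404 through 164.408 (500 or more individuals for prompt HHS notice, more than 500 residents of one state for media notice). The function name and parameters are hypothetical, and actual obligations and deadlines should be confirmed against the regulation or with counsel.

```python
def notification_targets(total_affected: int, max_in_one_state: int) -> list[str]:
    """List who must be notified for a breach of unsecured PHI (simplified)."""
    # Affected individuals are always notified.
    targets = ["affected individuals"]
    if total_affected >= 500:
        targets.append("HHS, at the same time as individuals")
    else:
        targets.append("HHS, via the annual breach log")
    # Media notice depends on how many residents of a single state are affected.
    if max_in_one_state > 500:
        targets.append("prominent media outlets in that state")
    return targets


# A small breach versus a large one concentrated in a single state.
print(notification_targets(12, 12))
print(notification_targets(800, 650))
```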

AI Hallucinations: The Clinical Accuracy Problem

Beyond HIPAA compliance, AI-generated session notes present a clinical accuracy challenge that has direct compliance and audit implications. AI hallucination — the generation of plausible but factually incorrect content — is a well-documented characteristic of large language models. In the context of ABA session notes, hallucinations could include fabricated behavioral frequencies, invented antecedent-behavior-consequence sequences, incorrect client identifiers, or progress descriptions that do not match the actual session data.

BCBAs are fully liable for all notes they sign off on, regardless of whether AI generated the initial draft. The BACB Ethics Code requires accurate, properly maintained records: Section 2.05 covers documentation protection and retention, and Section 3.11 covers documenting professional activity. A BCBA who signs an AI-generated note without thoroughly reviewing it against the raw session data is assuming liability for any inaccuracies the AI introduced. If those inaccuracies are discovered during a payer audit, the consequences can include denied reimbursements, recoupment demands, and potential fraud allegations.
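Part of the review against raw session data can be supported by an automated cross-check that flags mismatches for the clinician. The sketch below assumes, purely for illustration, that the AI draft reports frequencies in a `behavior: N` format and that raw counts are available as a dictionary. The `count_discrepancies` function is hypothetical and supplements the BCBA's own review rather than replacing it.

```python
import re


def count_discrepancies(draft: str, raw_counts: dict[str, int]) -> list[str]:
    """Flag behaviors whose count in the draft is missing or differs from raw data."""
    problems = []
    for behavior, raw in raw_counts.items():
        # Look for "<behavior>: <number>" anywhere in the draft text.
        match = re.search(rf"{re.escape(behavior)}:\s*(\d+)", draft, flags=re.IGNORECASE)
        if match is None:
            problems.append(f"{behavior}: not found in draft")
        elif int(match.group(1)) != raw:
            problems.append(f"{behavior}: draft says {match.group(1)}, raw data says {raw}")
    return problems


# Illustrative draft in which the AI has inflated one frequency.
draft = "Session summary. Elopement: 3. Aggression: 1. Client tolerated transitions."
raw = {"elopement": 2, "aggression": 1, "self-injury": 0}

problems = count_discrepancies(draft, raw)
print(problems)
```

A check like this only catches numeric mismatches in a known format. Fabricated narrative, such as an invented antecedent-behavior-consequence sequence, still requires a human reader.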

The practical recommendation from compliance experts is clear: every AI-generated note must be reviewed against the original session data before it is finalized. This human-in-the-loop requirement reduces but does not eliminate the time savings that AI documentation provides. A note that takes 30 seconds to generate but 10 minutes to verify against session data still saves time compared to 30 minutes of manual writing — but the savings are less dramatic than AI vendors’ marketing materials typically suggest.
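The arithmetic behind that comparison is worth making explicit. The sketch below uses the figures from the paragraph above (30 seconds to generate, 10 minutes to verify, 30 to 60 minutes of manual writing); the `net_savings` function is a hypothetical helper.

```python
def net_savings(manual_min: float, generate_min: float, review_min: float) -> tuple[float, float]:
    """Return (minutes saved, percent saved) per note for AI drafting plus review."""
    ai_total = generate_min + review_min
    saved = manual_min - ai_total
    percent = saved * 100 / manual_min
    return saved, percent


# Low and high ends of the manual documentation range cited in the article.
low = net_savings(manual_min=30, generate_min=0.5, review_min=10)
high = net_savings(manual_min=60, generate_min=0.5, review_min=10)
print(low, high)
```

Under these assumptions the practice saves 19.5 minutes per note (65 percent) against a 30-minute baseline, compared with 29.5 minutes (about 98 percent) if the generated note were accepted without review.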

A BCBA who signs an AI-generated session note without verifying it against raw session data is not just risking clinical inaccuracy — they are assuming personal liability for content they did not write and may not have adequately reviewed, with potential consequences ranging from denied reimbursements to ethics code violations.

Audit Implications: What Payers and Regulators Will Look For

The adoption of AI-generated session notes introduces a new category of audit risk. Payers conducting utilization reviews or fraud investigations will eventually develop protocols for identifying and scrutinizing AI-generated documentation. The uniformity of AI-generated language, the consistency of formatting, and the potential for systematically inflated or deflated progress descriptions are all patterns that automated audit tools can detect.

Practices that use AI session note tools should document their AI use transparently. This includes recording in the client file that AI tools are used in the documentation process, specifying which AI tool is used and how it is integrated into the clinical workflow, and ensuring that the AI’s role is disclosed to clients and families as part of the informed consent process. Section 2.11 of the BACB Ethics Code requires informed consent, and the use of AI in documentation is a material fact that clients have a right to know.

From a compliance standpoint, the safest approach is to treat AI as a drafting assistant rather than a documentation system. The AI generates a first draft; the clinician reviews, edits, and finalizes the note based on their direct observation and clinical judgment. The final note is the clinician’s work product, informed by AI but not produced by AI. This framing preserves the clinician’s authorship and responsibility while capturing the efficiency benefits of AI-assisted documentation.

For ABA practice owners, the key takeaway is that AI documentation tools are not plug-and-play, compliance-neutral additions to the clinical workflow. Each tool must be evaluated for HIPAA compliance, BAA availability, data processing architecture, training data policies, and clinical accuracy safeguards. The time spent on that evaluation is an investment in regulatory protection, because the consequences of getting it wrong include HIPAA penalties of up to $1.5 million per violation category per year (a statutory cap that HHS adjusts annually for inflation), BACB ethics investigations, and payer recoupment demands that can threaten a practice’s financial viability.

AT A GLANCE

| Topic | Summary |
| --- | --- |
| Core HIPAA requirement | Signed Business Associate Agreement (BAA) with any AI vendor processing PHI |
| HIPAA-eligible AI infrastructure | Microsoft Azure OpenAI Service (supports BAA; used by RethinkBH Session Note AI) |
| Not HIPAA-compliant | Consumer ChatGPT, Google Gemini, consumer Copilot; cannot be used with PHI |
| BACB warning | Data entered into generative AI may be used as training material and exported to other users |
| Privacy Rule | AI can only access PHI for permitted purposes; minimum necessary standard applies |
| Security Rule | Encryption in transit and at rest; role-based access; audit logs; MFA; risk assessments |
| Breach Notification | AI-specific risks include model memorization, prompt injection, training data extraction |
| Clinical accuracy | AI hallucination requires human-in-the-loop review of every generated note |
| BCBA liability | BCBAs are fully liable for all notes they sign, including AI-generated content |
| Audit risk | Payers will develop protocols for detecting and scrutinizing AI-generated documentation patterns |
| Penalty exposure | Up to $1.5 million per HIPAA violation category per year (HHS enforcement) |

SOURCES & REFERENCES

| No. | Source |
| --- | --- |
| 1 | BACB. Statement on Generative AI and Risks to Certificants. Behavior Analyst Certification Board. Referenced in ABA Matrix compliance guide, 2025. |
| 2 | SimplePractice. “What Therapists Must Know About HIPAA-Compliant AI Note-Taking.” November 2024 survey data. |
| 3 | RethinkBH. “Session Note AI — Automation for ABA Therapy.” Product documentation. Built on Azure OpenAI infrastructure. |
| 4 | Praxis Notes. “AI ABA Documentation Ethics: BCBA Checklist.” January 2026. |
| 5 | PMC / Jennings and Cox. “Starting the Conversation Around the Ethical Use of AI in Applied Behavior Analysis.” 2024. |
| 6 | HHS Office for Civil Rights. HIPAA Privacy Rule, Security Rule, and Breach Notification Rule. 45 CFR Parts 160 and 164. |
| 7 | ABA Matrix. “AI in Behavior Analysis and the Safe Solutions for ABA Practices.” June 2025. |
| 8 | MedCity News / David Stevens (CentralReach). “Understanding the Ethics of AI in ABA Therapy.” January 2025. |
| 9 | The Behavior Academy / Dr. David J. Cox. “Behind the Curtain of AI Tools in ABA: What Every BCBA Needs to Know.” September 2025. |
